The GenAI Skill Data Professionals Need Most: Evaluation

Only by evaluating our system can we know whether it is good.

GenAI has made output generation cheap.

A decent prompt can already produce summaries, classifications, SQL explanations, insight drafts, documentation, and stakeholder-facing text.

The harder part is deciding:

Is the output correct?

Is it grounded in the input?

Is it consistent across realistic cases?

Would I trust it inside an actual workflow?

That is the opening for data professionals. Evaluation turns GenAI from a demo into something that can be used responsibly in real work.

OpenAI’s current eval guidance makes the same distinction: the useful kind of eval is not a public benchmark. It is a task-specific test for the application you are building.

By the end of this article, you should have a clearer view of what evaluation means in practice, what to test for, and why data professionals are well-positioned to own this skill.

Curious about it? Let’s get into it.

GenAI output is easy. Trusting it is not.

Prompting gets attention because it is visible.

Evaluation matters more because it decides whether the work holds up.

Most professionals first experience GenAI as a productivity tool: ask, receive, refine. That makes it tempting to treat a fluent output as a good output.

But GenAI systems are variable.

The same system can behave differently across prompts, data slices, and edge cases.

In professional settings, the cost of a wrong answer is higher than the cost of a slightly worse prompt.

That is why evaluation matters.

What does evaluation actually mean in real work?

Evaluation here does not mean comparing GPT-5.5 against Claude on a benchmark.

It means testing a GenAI workflow against the task’s standards.

The standard practice is to separate broad model benchmarks from the specific evaluations you design for your own application. Google’s evaluation documentation also frames this as a test-driven process: define the task, prepare evaluation data, choose the quality criteria, and inspect the results.

Consider workflows that data professionals might evaluate:

A GenAI assistant that summarizes KPI movements

A RAG system answering questions from internal policy documents

A classifier that tags customer feedback or support tickets

A model that explains SQL output to business users

These are not abstract research problems. They are real tasks that need real testing.

The four questions every GenAI workflow should answer

The heart of evaluation is not a long taxonomy of metrics. It comes down to four questions:

A. Did it complete the task?

This asks whether the GenAI application performed the task it was given, such as classifying a ticket into one of the allowed categories or summarizing the table instead of restating it.

In technical terms, this is often measured through deterministic checks: regex matching for expected formats, JSON schema validation, or exact-match accuracy against a predefined label set.

Exact Match = 1 if output matches ground truth, else 0

This is the simplest evaluation dimension, but also the most often skipped.
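To make these deterministic checks concrete, here is a minimal Python sketch for a ticket classifier. The label set, the `TCK-` ID format, and the function names are hypothetical. The exact-match check trims whitespace and ignores case, which is a design choice rather than part of the definition, and the JSON check sticks to the standard library instead of a full JSON Schema validator.

```python
import json
import re

# Hypothetical label set for a support-ticket classifier.
ALLOWED_LABELS = {"billing", "bug", "feature_request", "account"}

# Hypothetical format rule: ticket IDs look like "TCK-" followed by digits.
TICKET_ID = re.compile(r"TCK-\d+")


def exact_match(output: str, ground_truth: str) -> int:
    """Return 1 if the output matches the ground truth after trimming and lowercasing, else 0."""
    return int(output.strip().lower() == ground_truth.strip().lower())


def valid_json_label(raw: str) -> bool:
    """Check that the output is a JSON object whose 'label' is a string in the allowed set."""
    try:
        payload = json.loads(raw)
    except json.JSONDecodeError:
        return False
    label = payload.get("label") if isinstance(payload, dict) else None
    return isinstance(label, str) and label in ALLOWED_LABELS


def valid_ticket_id(text: str) -> bool:
    """Regex format check: the whole (trimmed) string must be a ticket ID."""
    return TICKET_ID.fullmatch(text.strip()) is not None


outputs = ['{"label": "billing"}', '{"label": "refund"}', "billing"]
print([valid_json_label(o) for o in outputs])  # [True, False, False]
print(exact_match(" Billing ", "billing"))      # 1
print(valid_ticket_id("TCK-123"))               # True
```

The second output is valid JSON but uses a label outside the allowed set; the third is the right label but not in the required JSON format. Both count as failures to complete the task.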

B. Is it correct and grounded?

Did it stay faithful to the source data, retrieved context, or provided evidence?

This is especially important for RAG systems, analytical summaries, and policy assistants.

Basic text overlap metrics such as ROUGE or BLEU are not sufficient here. They check surface-level word similarity but miss meaning.
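A toy comparison shows the problem. The function below is a simplified unigram-recall score in the spirit of ROUGE-1 (lowercase whitespace tokens, set overlap, no stemming), not an official ROUGE implementation, and the sentences are invented for illustration.

```python
def unigram_recall(reference: str, candidate: str) -> float:
    """Simplified ROUGE-1-style recall: share of distinct reference words found in the candidate.

    Real ROUGE implementations typically also strip punctuation, can apply
    stemming, and count repeated words; this sketch does none of that.
    """
    ref_tokens = set(reference.lower().split())
    cand_tokens = set(candidate.lower().split())
    if not ref_tokens:
        return 0.0
    return len(ref_tokens & cand_tokens) / len(ref_tokens)


reference = "revenue declined this month"
contradiction = "revenue did not decline this month"  # opposite meaning, many shared words
paraphrase = "revenue fell over the past month"        # same meaning, different words

print(unigram_recall(reference, contradiction))  # 0.75
print(unigram_recall(reference, paraphrase))     # 0.5
```

The sentence that contradicts the reference scores higher than the faithful paraphrase, which is exactly the blind spot of surface-level overlap.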

A stronger approach is to compare the output and the source in an embedding space. A common metric for that comparison is cosine similarity:

Cosine Similarity(A, B) = (A · B) / (||A|| × ||B||)

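A high cosine similarity means the generated text is semantically close to the source. A low score may indicate that the model drifted or fabricated information. The reverse does not hold, though: a high score does not prove grounding, because an output that changes a single number can still sit very close to its source in embedding space.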
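The formula translates directly into code. This sketch assumes you already have embedding vectors for the output and the source from some embedding model; the toy 2-d vectors and the 0.8 drift threshold are hypothetical, and any real threshold would need calibrating on your own data.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """dot(A, B) / (norm(A) * norm(B)) for two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for zero vectors")
    return dot / (norm_a * norm_b)


def flag_possible_drift(score: float, threshold: float = 0.8) -> bool:
    """Flag outputs whose similarity to the source falls below a hypothetical threshold."""
    return score < threshold


# Toy vectors standing in for real embeddings of the source and the output.
source_vec = [3.0, 4.0]
output_vec = [4.0, 3.0]
score = cosine_similarity(source_vec, output_vec)
print(round(score, 2), flag_possible_drift(score))  # 0.96 False
```

Treat the flag as a prompt for review, not a verdict: as noted above, a high score can still hide a wrong number.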

For RAG systems specifically, you also need to check whether the retrieval step actually found the right documents before the model started generating. That is where Recall@K matters:

Recall@K = |Relevant Documents ∩ Top-K Retrieved| / |Relevant Documents|

If the retriever misses the relevant source, even a perfect generator will produce the wrong answer.

These are only a few examples from the broader set of metrics used to evaluate generative AI.
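Recall@K, from the retrieval check above, is equally simple to compute for a single question. The document IDs below are hypothetical; in practice you would average the score across a labeled set of questions.

```python
def recall_at_k(relevant: set[str], retrieved: list[str], k: int) -> float:
    """Recall@K = |relevant & top-K retrieved| / |relevant|."""
    if not relevant:
        raise ValueError("Recall@K is undefined when there are no relevant documents")
    top_k = set(retrieved[:k])
    return len(relevant & top_k) / len(relevant)


# Hypothetical IDs: the documents that actually answer one policy question,
# and the retriever's ranked results for that question.
relevant = {"policy_07", "policy_12"}
retrieved = ["policy_03", "policy_07", "policy_19", "policy_12", "policy_01"]

print(recall_at_k(relevant, retrieved, k=3))  # 0.5
print(recall_at_k(relevant, retrieved, k=5))  # 1.0
```

At K=3 the retriever has found only one of the two relevant documents, so the generator is working from incomplete evidence no matter how good it is.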

C. Is the quality consistent across realistic cases?

A single good example proves very little.

The system should be tested against various cases, such as:

Easy cases

Ambiguous cases

Incomplete inputs

Edge cases

Cases where the correct response is “not enough information.”

You already know not to trust a model based on a single clean validation sample.

GenAI deserves the same discipline. That means building automated test suites that run every time the prompt, model version, or pipeline changes.
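A minimal sketch of such a suite, assuming a hypothetical classifier wrapped as a plain Python function: it runs every case and reports a pass rate per case category, so a weak slice cannot hide inside one overall number. `toy_system` is a keyword-rule stand-in for the real GenAI call, and the cases are invented.

```python
from collections import defaultdict
from typing import Callable

# Hypothetical test cases: each has a category, an input, and the expected label.
TEST_CASES = [
    {"category": "easy", "input": "I was charged twice this month", "expected": "billing"},
    {"category": "ambiguous", "input": "The app keeps asking me to pay again", "expected": "billing"},
    {"category": "incomplete", "input": "it doesn't work", "expected": "not_enough_information"},
    {"category": "edge", "input": "", "expected": "not_enough_information"},
]


def run_suite(system: Callable[[str], str], cases: list[dict]) -> dict[str, float]:
    """Run every case through the system and return the pass rate per category."""
    passed = defaultdict(int)
    total = defaultdict(int)
    for case in cases:
        total[case["category"]] += 1
        if system(case["input"]).strip().lower() == case["expected"]:
            passed[case["category"]] += 1
    return {cat: passed[cat] / total[cat] for cat in total}


def toy_system(text: str) -> str:
    """Hypothetical stand-in for the real GenAI call: a naive keyword rule."""
    return "billing" if "charged" in text or "pay" in text else "bug"


results = run_suite(toy_system, TEST_CASES)
print(results)  # {'easy': 1.0, 'ambiguous': 1.0, 'incomplete': 0.0, 'edge': 0.0}
```

The overall pass rate here would be 50%, but the per-category view shows the failures are concentrated in incomplete and edge inputs. Running the same function in CI whenever the prompt, model version, or pipeline changes turns it into a regression test.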

D. How does it fail?

This is the most important professional instinct.

A workflow that fails clearly is easier to manage than one that sounds confident while being wrong.

A good evaluation should reveal things such as:

Unsupported claims

Fabricated numbers

Hidden assumptions

Formatting failures

Misleading simplifications

This is where logging and tracing tools become important. Capturing inputs and outputs systematically lets you identify failure patterns instead of guessing.

The job is not to prove that the system works. The job is to learn where it fails before someone depends on it.
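One way to start is to log every input and output as a JSON line with failure tags attached. The sketch below, with hypothetical field names, flags numbers in the output that never appear in the source. It is a deliberately crude heuristic: correctly derived figures such as a computed percentage will also be flagged, and formats like "1,200" versus "1200" are not normalized, so it marks outputs for review rather than proving fabrication.

```python
import json
import re
from datetime import datetime, timezone

NUMBER = re.compile(r"-?\d+(?:\.\d+)?")


def unsupported_numbers(source: str, output: str) -> list[str]:
    """Return numbers that appear in the output but nowhere in the source text."""
    source_numbers = set(NUMBER.findall(source))
    return [n for n in NUMBER.findall(output) if n not in source_numbers]


def log_record(case_id: str, source: str, output: str) -> str:
    """Build one JSON line capturing the input, the output, and any failure tags."""
    tags = []
    if unsupported_numbers(source, output):
        tags.append("fabricated_number")
    record = {
        "case_id": case_id,
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "input": source,
        "output": output,
        "failure_tags": tags,
    }
    return json.dumps(record)


source = "Revenue: 120, prior month: 130"
output = "Revenue fell from 130 to 120, a 9% drop."
print(unsupported_numbers(source, output))  # ['9']
print(log_record("kpi-001", source, output))
```

In this example the flag is earned: a fall from 130 to 120 is roughly a 7.7% decline, not 9%. Appending these lines to a file over many runs lets you count which tags recur instead of guessing.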

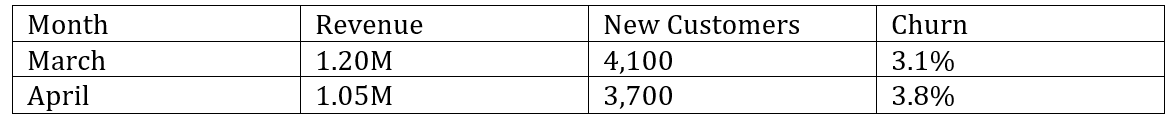

An example: evaluating a KPI commentary assistant

To make this concrete, consider a workflow that many data teams will recognize.

A GenAI assistant receives a monthly KPI table and writes a short business commentary.

The expected output should:

Identify that revenue declined

Mention lower acquisition and higher churn

Avoid claiming causality unless supported

Keep the commentary concise and business-appropriate

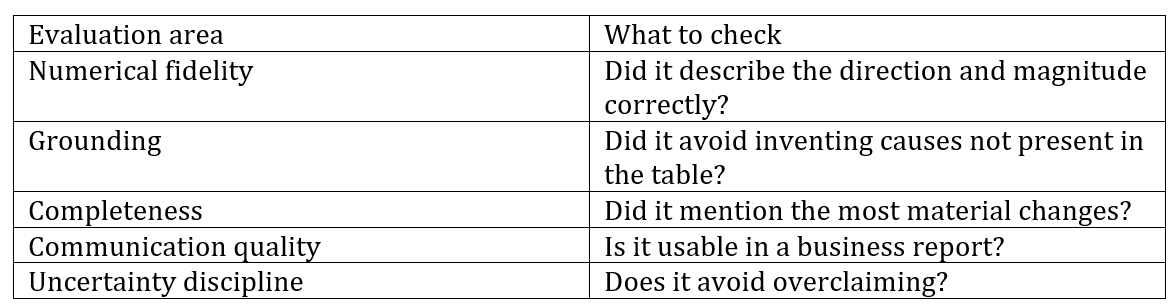

Then you evaluate the output against each of those expectations.

This gives you something concrete to recognize in your own work. It also ties the evaluation to your task’s specific standards instead of a generic notion of quality.
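Some of these expectations can be scored automatically with binary checks. The sketch below uses crude keyword rules; the term lists, the 80-word limit, and the two sample commentaries are hypothetical. Keyword matching cannot judge whether a causal claim is actually supported by the data, so for that criterion an LLM-as-a-judge prompt or human review is usually still needed.

```python
# Hypothetical keyword lists; a real rubric would be tuned on your own outputs.
DECLINE_TERMS = ("declin", "fell", "drop", "decrease")
CAUSAL_TERMS = ("because", "due to", "caused", "driven by", "as a result")
MAX_WORDS = 80  # hypothetical limit for "concise"


def score_commentary(text: str) -> dict[str, int]:
    """Score a KPI commentary against the four expectations with 0/1 checks."""
    lower = text.lower()
    return {
        "identifies_revenue_decline": int("revenue" in lower and any(t in lower for t in DECLINE_TERMS)),
        "mentions_acquisition_and_churn": int("acquisition" in lower and "churn" in lower),
        "avoids_unsupported_causality": int(not any(t in lower for t in CAUSAL_TERMS)),
        "is_concise": int(len(text.split()) <= MAX_WORDS),
    }


good = ("Revenue declined month over month. New customer acquisition was lower "
        "and churn was higher over the same period.")
bad = "Revenue dropped because the new pricing drove customers away, with churn up sharply."

print(score_commentary(good))  # all four checks score 1
print(score_commentary(bad))   # misses acquisition and asserts a cause
```

Keeping each criterion as its own 0/1 score, rather than one blended number, shows exactly which expectation an output broke.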

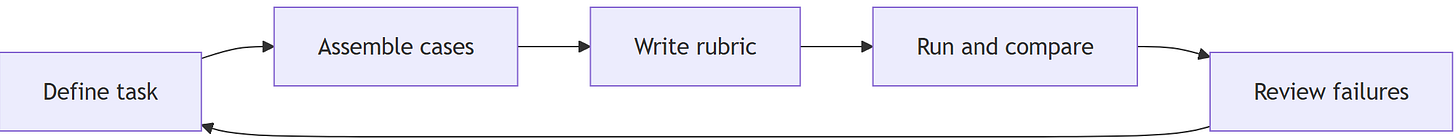

A simple evaluation loop for data professionals

You do not need a full evaluation framework to start.

A five-step loop is enough:

1. Define the job clearly. What should the GenAI system actually do?

2. Collect representative test cases. Not just the clean ones. Include strange, messy, and borderline cases.

3. Write a simple scoring rubric. For structured outputs, use exact-match checks. For subjective quality, use a binary (0/1) rubric or a short LLM-as-a-judge prompt.

4. Compare outputs across prompts, models, or versions against the same cases. Eval guidance emphasizes using evals to test and iterate rather than relying on ad hoc inspection.

5. Record recurring failures. The failure pattern matters more than one overall score.

Managed evaluation services reflect the same general pattern: evaluation datasets, rubric-based measures, deterministic metrics where suitable, and custom checks for task-specific requirements.
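Steps 4 and 5 of the loop can be sketched together: run each prompt version against the same cases and count failure types, not just a pass rate. The two version functions are hypothetical stand-ins for real model calls, and the labels, cases, and failure tags are illustrative.

```python
from collections import Counter
from typing import Callable, Optional

# Hypothetical allowed labels and test cases shared by every version.
ALLOWED = {"billing", "bug", "not_enough_information"}
CASES = [
    ("I was charged twice", "billing"),
    ("app crashes on login", "bug"),
    ("help", "not_enough_information"),
]


def check(expected: str, output: str) -> Optional[str]:
    """Return a failure tag for one output, or None if it passes."""
    cleaned = output.strip().lower()
    if cleaned not in ALLOWED:
        return "invalid_label"
    if cleaned != expected:
        return "wrong_label"
    return None


def prompt_v1(text: str) -> str:
    """Hypothetical stand-in for prompt version 1."""
    return "Billing" if "charged" in text else "bug"


def prompt_v2(text: str) -> str:
    """Hypothetical stand-in for prompt version 2."""
    if "charged" in text:
        return "billing"
    return "bug" if "crash" in text else "unsure"


def compare_versions(versions: dict[str, Callable[[str], str]],
                     cases: list[tuple[str, str]]) -> dict:
    """Run each version on the same cases; report pass rate and failure-tag counts."""
    report = {}
    for name, system in versions.items():
        failures = Counter()
        for text, expected in cases:
            tag = check(expected, system(text))
            if tag is not None:
                failures[tag] += 1
        passed = len(cases) - sum(failures.values())
        report[name] = {"pass_rate": passed / len(cases), "failures": dict(failures)}
    return report


for name, result in compare_versions({"v1": prompt_v1, "v2": prompt_v2}, CASES).items():
    print(name, result)
```

Both versions pass two of the three cases, yet they fail differently: v1 returns a valid but wrong label, while v2 returns a label outside the allowed set. That difference is what step 5 asks you to record.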

Why data professionals are well-positioned to own this

Evaluation is not foreign to data professionals.

It draws on habits we already use:

Defining success criteria

Building representative samples

Distinguishing anecdotes from evidence

Checking edge cases

Analyzing errors instead of celebrating one good result

Deciding whether an output is fit for use

Data professionals already have much of the mindset evaluation requires.

The shift is applying that discipline to generative systems, not only predictive models.

That is why evaluation may become one of the most valuable GenAI-adjacent skills for analysts, data scientists, analytics engineers, and ML practitioners.

Conclusion

Evaluation is what turns GenAI from something impressive into something dependable. For data professionals, that matters more than learning a clever prompt pattern or chasing the newest model release.

The real advantage comes from knowing how to define good output, test it against realistic cases, trace where it fails, and decide whether it is ready to support actual work.

That habit is already close to how strong data professionals think: establish the standard, examine the evidence, and avoid trusting a result just because it looks convincing. GenAI simply gives that discipline a new place to matter. As these systems move deeper into analysis, reporting, search, and decision support, the professionals who can evaluate them well will be the ones who make them genuinely useful.